Postman Load Testing: Can You Do It?

Postman is one of the most widely used API tools in modern engineering teams. Developers use it daily to build requests, organize collections, test endpoints, and share API workflows. Because it sits so close to everyday API work, a natural question comes up again and again: can Postman do load testing too?

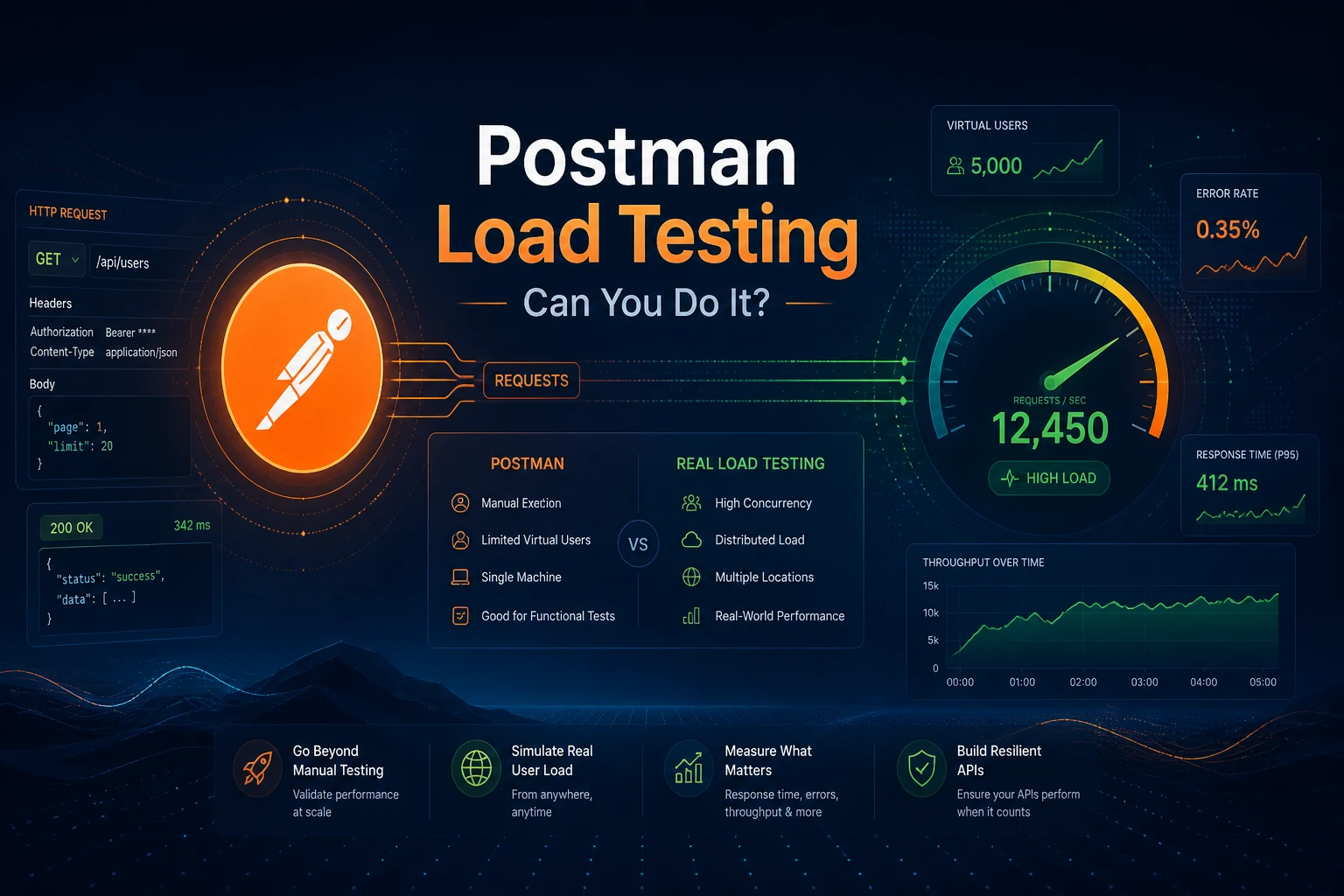

The answer needs a little nuance. Postman can absolutely help you prepare for load testing, and parts of the Postman ecosystem can automate repeated API checks. But if your goal is to simulate real concurrency, validate performance under load, and make operational decisions, Postman is not the tool I would trust for the job.

Postman is great for API development and functional testing. It is not a true load testing platform. Use it to validate request correctness, then move to a dedicated load testing workflow when you need concurrency, realistic traffic, and meaningful performance metrics.

Postman is strong at

- Building and organizing API requests

- Functional checks and assertions

- Environment management

- Developer and QA collaboration

Postman is weak at

- High concurrency simulation

- Realistic load modeling

- Performance metrics and thresholds

- Repeatable large-scale load generation

| Need | Postman | Dedicated load testing |
|---|---|---|
| Validate endpoint correctness | Excellent | Possible, but not the main job |
| Repeat requests in sequence | Good | Good |
| Simulate high concurrency | Limited | Core capability |
| Track p95/p99 latency | Limited | Core capability |
| Production-like traffic modeling | Weak | Core capability |

This article is written for teams that already use Postman in API development and are trying to decide where the boundary is between functional API checks and real load testing. The practical recommendation is based on a common engineering pattern: keep Postman for request correctness, smoke checks, and team collaboration, then move the same API scenario into a load testing workflow when the question becomes concurrency, latency under load, or release confidence.

The goal is not to argue against Postman. The goal is to avoid using the wrong measurement tool for a performance decision.

What Postman is built for

Postman is excellent at API development and functional validation. Teams use it to create requests, organize collections, attach environments, inspect responses, write assertions, document endpoints, and collaborate on request workflows. That is why it is so common in backend and QA teams. It reduces friction when you need to answer questions like: does this endpoint work, is authentication correct, do the headers look right, and did the payload come back in the shape we expected?

Those are important tasks, but they are not the same as asking whether an API can handle concurrency, sustained traffic, or bursty production behavior. Functional tools answer whether a system works. Load testing tools answer how a system behaves when many users or systems hit it at the same time. That distinction is where many teams get confused.

So before debating whether Postman can do load testing, it helps to clarify what job it was designed for. Postman is built for request creation, workflow validation, and API collaboration first. It is not primarily built to simulate thousands of users, coordinate distributed generators, or provide rigorous performance analysis. That does not make Postman weak. It just means it is optimized for a different problem.

Can you do load testing in Postman?

The short answer is: not really, at least not in the way most engineers mean it. You can use Postman and Newman to send repeated requests, loop through collections, and automate test runs. For very small experiments, that may look a little like load generation. But those workflows are not a substitute for genuine load testing.

A real load test needs control over concurrency, ramp-up, duration, thresholds, and repeatable scenario design. It needs to simulate traffic at enough scale to reveal bottlenecks. It usually needs reporting that separates latency percentiles, error rates, throughput, and system stability over time. Postman does not natively give you the depth or scale that serious performance validation requires.

That is why the right framing is not 'Can Postman send more than one request?' It absolutely can. The right framing is 'Can Postman model real production load well enough to trust the result?' For most teams and most systems, the answer is no.

What Postman can still help you validate before a load test

Even though Postman is not a dedicated load testing platform, it remains useful in the performance workflow. In practice, many teams start in Postman because it is already where the endpoint definitions live. They use it to validate that the base request works, that the authentication flow is correct, that example payloads are realistic, and that failure handling behaves as expected before a heavier test begins.

That matters more than people think. A poorly prepared load test often fails for the wrong reasons: wrong tokens, broken request bodies, stale IDs, or incorrect headers. Postman is a good place to eliminate those basic mistakes. You can create representative request examples, collect environment variables, and confirm that your API behaves correctly with realistic input data.
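Some of that basic hygiene can be checked mechanically before a heavier run. The Python sketch below resolves Postman-style `{{variable}}` placeholders against an environment dictionary. It then reports any placeholders that are still undefined, so a load test does not start with a request that can never succeed. The function names and the sample request are hypothetical. The sketch handles only simple `{{name}}` placeholders and deliberately ignores Postman dynamic variables such as `{{$guid}}` as well as values set by scripts.

```python
import re

# Matches simple Postman-style placeholders such as {{baseUrl}} or {{ token }}.
# Dynamic variables like {{$guid}} start with "$" and are deliberately not matched.
PLACEHOLDER = re.compile(r"\{\{\s*([A-Za-z0-9_.\-]+)\s*\}\}")


def resolve(template: str, env: dict[str, str]) -> str:
    """Substitute known placeholders; leave unknown ones untouched."""
    return PLACEHOLDER.sub(lambda m: env.get(m.group(1), m.group(0)), template)


def find_unresolved(request: dict[str, str], env: dict[str, str]) -> set[str]:
    """Return placeholder names used by the request that the environment does not define."""
    missing: set[str] = set()
    for value in request.values():
        for name in PLACEHOLDER.findall(value):
            if name not in env:
                missing.add(name)
    return missing


if __name__ == "__main__":
    # Hypothetical request fields copied from a Postman collection.
    request = {
        "url": "{{baseUrl}}/orders/{{orderId}}",
        "authorization": "Bearer {{token}}",
    }
    env = {"baseUrl": "https://staging.example.com", "token": "abc123"}

    print(resolve(request["url"], env))
    print(sorted(find_unresolved(request, env)))
```

In this example `orderId` is reported as missing. That is exactly the kind of stale or absent ID that would otherwise surface as a wall of errors in the middle of a load test.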

In other words, Postman is useful before load testing, adjacent to load testing, and supportive of load testing. It just should not be mistaken for the load testing phase itself.

Why Postman is not a real load testing tool

There are four main reasons. First, concurrency is limited and awkward compared with purpose-built performance tools. Load testing is fundamentally about many things happening at once. If the tool does not make concurrency a first-class concept, you are already forcing it beyond its comfort zone.

Second, Postman is not built to represent production traffic at meaningful scale. Mature load testing requires scenario control: ramping up users, keeping them active for a period, shaping request mixes, and often coordinating traffic across more than one machine or region. Postman is much better at executing request collections than at simulating a real user population.
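Shaping a request mix is one of the pieces that a request collection does not express naturally. As a rough illustration, the Python sketch below picks each virtual user's next request from a weighted mix, so that browsing dominates and checkout is rare. The endpoint names and weights are hypothetical. In practice, the weights should come from production traffic data such as access logs.

```python
import random
from collections import Counter

# Hypothetical traffic mix: share of requests per endpoint, e.g. derived from access logs.
REQUEST_MIX = {
    "GET /products": 0.70,
    "GET /cart": 0.20,
    "POST /checkout": 0.10,
}


def next_request(rng: random.Random) -> str:
    """Pick the next request a virtual user sends, weighted by REQUEST_MIX."""
    endpoints = list(REQUEST_MIX)
    weights = list(REQUEST_MIX.values())
    return rng.choices(endpoints, weights=weights, k=1)[0]


def sample_mix(n: int, seed: int = 42) -> Counter:
    """Draw n requests with a fixed seed so the same mix can be replayed across runs."""
    rng = random.Random(seed)
    return Counter(next_request(rng) for _ in range(n))


if __name__ == "__main__":
    counts = sample_mix(10_000)
    for endpoint, count in counts.most_common():
        print(f"{endpoint:16} {count / 10_000:.1%}")
```

Seeding the random generator is a small design choice that supports repeatability: two runs of the same scenario send the same sequence of request types.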

Third, reporting is not built around performance analysis. Functional test tooling usually tells you whether a request succeeded. Performance tooling has to go further: p95 and p99 latency, error rate under concurrency, throughput trends, saturation points, and comparisons between runs. That is a different style of observability.
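To see why p95 and p99 matter more than an average, consider a small latency sample with a slow tail. The Python sketch below uses the nearest-rank definition of a percentile. This is one of several common definitions, and load testing tools may interpolate differently. The latency values are made up for illustration.

```python
import math
import statistics


def percentile(samples: list[float], p: float) -> float:
    """Nearest-rank percentile: smallest value with at least p% of samples at or below it."""
    if not samples:
        raise ValueError("no samples")
    if not 0 < p <= 100:
        raise ValueError("p must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)  # 1-based rank into the sorted samples
    return ordered[rank - 1]


if __name__ == "__main__":
    # Illustrative latencies in milliseconds: 90 fast responses and 10 slow ones.
    latencies_ms = [50.0] * 90 + [
        800.0, 900.0, 1000.0, 1100.0, 1200.0,
        1300.0, 1400.0, 1500.0, 2000.0, 3000.0,
    ]

    print("mean:", statistics.mean(latencies_ms))
    print("p50: ", percentile(latencies_ms, 50))
    print("p95: ", percentile(latencies_ms, 95))
    print("p99: ", percentile(latencies_ms, 99))
```

With this sample the mean is 187 ms, yet p95 is 1,200 ms and p99 is 2,000 ms. The average hides the fact that one request in ten takes at least 800 ms.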

Fourth, repeatability suffers when teams try to stretch a functional tool into a performance tool. Once you start hand-building workarounds for loops, parallelism, and monitoring, you may be spending time on machinery instead of learning anything useful about the API.

Collection Runner, Newman, and Monitors: useful, but not load testing

This is where confusion usually starts. Postman's Collection Runner can execute a collection multiple times, and newer Postman releases add a basic performance-testing option to it that runs a limited number of virtual users from your local machine. Newman lets you run Postman collections from the command line and integrate them into scripts or CI pipelines. Monitors run collections on a schedule to check that requests continue to behave correctly over time. All three are useful, and all three are often mentioned when people ask whether Postman can do load testing.

But none of them turns Postman into a serious load generator. Collection Runner is good for repeated functional runs. Newman is good for automation. Monitors are good for lightweight checks and uptime-style validation. Those are adjacent capabilities, not a replacement for real concurrency testing.

If your goal is to answer, 'Will this API remain fast and stable under 500, 5,000, or 50,000 virtual users?' then Runner, Newman, and Monitors are the wrong instruments. They can help you prepare, but they are not the measurement system you should rely on for performance decisions.

```shell
# Example: Newman is useful for automation, not real load testing
newman run MyCollection.json \
  --environment staging.json \
  --reporters cli,junit
```

What a real load test actually needs

A trustworthy load test needs a few non-negotiable things: concurrency control, scenario realism, clear thresholds, good metrics, and repeatability. Concurrency control means you can specify how many virtual users or requests per second should be active, and how that load ramps over time. Scenario realism means the workload reflects actual behavior instead of one toy request repeated forever.
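Concretely, a ramping load profile is often described as a list of stages. Each stage has a duration and a target number of virtual users, and the load is interpolated linearly between targets. This is similar in spirit to how tools such as k6 describe stages. However, the Python sketch below is a standalone, hypothetical helper, not any tool's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    duration_s: float  # how long this stage lasts, in seconds
    target_vus: int    # virtual users active at the end of the stage


def target_vus_at(t: float, stages: list[Stage], start_vus: int = 0) -> int:
    """Virtual users that should be active t seconds (t >= 0) into the test.

    Each stage ramps linearly from the previous stage's target to its own.
    After the last stage the load drops to zero.
    """
    elapsed = 0.0
    previous = start_vus
    for stage in stages:
        if t < elapsed + stage.duration_s:
            fraction = (t - elapsed) / stage.duration_s
            return round(previous + (stage.target_vus - previous) * fraction)
        elapsed += stage.duration_s
        previous = stage.target_vus
    return 0


if __name__ == "__main__":
    # Hypothetical profile: ramp from 50 to 1,000 users, hold, then cool down.
    profile = [
        Stage(duration_s=120, target_vus=1000),  # ramp-up
        Stage(duration_s=600, target_vus=1000),  # steady state
        Stage(duration_s=60, target_vus=0),      # cool-down
    ]
    for t in (0, 60, 120, 400, 750, 780):
        print(f"t={t:>4}s -> {target_vus_at(t, profile, start_vus=50)} VUs")
```

With the example profile, the helper returns 50 VUs at t=0, 525 at t=60 s, 1,000 throughout the steady state, and 500 halfway through the cool-down. A load generator would sample this function periodically and start or stop virtual users to match.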

Good load tests also need metrics that help teams make decisions. That includes latency percentiles, not just averages; throughput, not just success/failure; and error breakdowns that show whether the system degraded cleanly or started failing unpredictably. If you need a refresher on why percentiles matter, the p95 vs p99 latency guide is worth reading.
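As a minimal sketch of that kind of reporting, the Python function below summarizes a batch of request results into throughput, error rate, and an error breakdown by cause. The `Result` record is an assumption for illustration. So is the convention that a status of 400 or above, or a missing status for timeouts and connection errors, counts as a failure.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Result:
    status: Optional[int]  # HTTP status code, or None for timeouts/connection errors


def summarize(results: list[Result], wall_clock_s: float) -> dict:
    """Throughput, error rate, and failures grouped by cause for one test window."""
    if wall_clock_s <= 0:
        raise ValueError("wall_clock_s must be positive")
    failures = [r for r in results if r.status is None or r.status >= 400]
    breakdown = Counter(
        "network_error" if r.status is None else str(r.status) for r in failures
    )
    return {
        "requests": len(results),
        "throughput_rps": len(results) / wall_clock_s,
        "error_rate": len(failures) / len(results) if results else 0.0,
        "errors_by_cause": dict(breakdown),
    }


if __name__ == "__main__":
    # Illustrative results from a 10-second window.
    sample = [Result(200)] * 940 + [Result(503)] * 45 + [Result(None)] * 15
    print(summarize(sample, wall_clock_s=10.0))
```

Separating a 503 from a network error matters. The first suggests the service is shedding load, while the second may point at connection limits or timeouts elsewhere in the path.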

Finally, the test needs to be repeatable. If you cannot rerun the same scenario after a code change or infrastructure adjustment and compare results cleanly, the output is much less useful. This is one reason teams outgrow improvised Postman-based approaches quickly.
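One simple way to make that comparison explicit is to store a few headline metrics per run and flag any that regressed beyond a tolerance. The Python sketch below treats every metric as lower-is-better, such as p95 latency or error rate, and uses a 10% tolerance. Both the metric names and the tolerance are illustrative choices, not a standard.

```python
def find_regressions(
    baseline: dict[str, float],
    candidate: dict[str, float],
    tolerance: float = 0.10,
) -> dict[str, tuple[float, float]]:
    """Return metrics where the candidate run is worse than baseline by more than tolerance.

    All metrics are treated as lower-is-better (latency, error rate).
    Only metrics present in both runs are compared.
    """
    regressions = {}
    for name in baseline.keys() & candidate.keys():
        before, after = baseline[name], candidate[name]
        if after > before * (1 + tolerance):
            regressions[name] = (before, after)
    return regressions


if __name__ == "__main__":
    # Hypothetical results from the same scenario before and after a release.
    baseline = {"p95_ms": 420.0, "p99_ms": 900.0, "error_rate": 0.002}
    candidate = {"p95_ms": 445.0, "p99_ms": 1300.0, "error_rate": 0.002}
    print(find_regressions(baseline, candidate))
```

Here only p99 is flagged: it grew from 900 ms to 1,300 ms, while p95 moved by less than the 10% tolerance. The comparison is only meaningful if both runs used the same scenario, load shape, and environment.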

When Postman is enough and when it is not

Postman is enough when the question is basic and functional. Can the endpoint respond correctly? Do the headers work? Did auth break? Is the request body valid? Can the API team share a repeatable collection with engineering or QA? For those questions, Postman is a very good tool.

It is not enough when the question becomes operational or performance-oriented. Can the service absorb a marketing spike? What happens when many users log in at the same time? Does the API remain stable during a ramp from 50 to 1,000 concurrent users? Where does p95 latency go under stress? Those are load testing questions, and they require tools designed for that type of answer.

This boundary is important because it prevents teams from gaining false confidence. An API that passes every Postman collection can still fail badly in production if the issue is concurrency, thread contention, connection pooling, caching behavior, or downstream dependency saturation.

A practical workflow: use Postman first, then move to load testing

A strong workflow is simple. Start in Postman to confirm that the endpoint, payload, and environment configuration are correct. Use it to build realistic example requests and validate basic assertions. Then move the scenario into a real load testing tool or managed workflow when you want to measure performance.

This division of labor works well because it uses each tool for what it does best. Postman helps you remove trivial mistakes early. Load testing then measures stability and performance under concurrency. If your team needs a broader mental model, the guide on how to load test an API and the LoadTester homepage together give a good foundation for this workflow.

In practice, this means you should not feel bad about starting with Postman. You should just avoid stopping there when the real question is load.

Postman vs real load testing tools

The easiest way to understand the difference is to compare intent. Postman is designed to help a developer or tester work with APIs efficiently. Real load testing tools are designed to help teams simulate production traffic and interpret system behavior under pressure.

That difference affects everything: the scripting model, the metrics, the reporting, the scale, and the workflow. In Postman, success usually means 'the request ran and the assertion passed.' In load testing, success may still include passed assertions, but it also means 'the system stayed within threshold while concurrency increased and error rates remained controlled.'

This is why teams that care about release confidence, capacity planning, or regression detection usually move toward specialized tooling. The HTTP load testing tool checklist is a useful reference if you are evaluating what that tooling should include.

If the question begins with “does the request work?”, Postman is often the right place to start. If the question begins with “what happens when many users hit it at once?”, you have crossed into load testing.

Better alternatives if you need real load testing

If you genuinely need load testing, use a tool that is built for it. That could be a scriptable open-source option, a cloud platform, or a managed workflow, depending on your team. The key is not whether the tool is fashionable. The key is whether it supports concurrency, realistic traffic models, strong metrics, and repeatable execution.

Many teams compare options such as k6, JMeter, Gatling, Locust, or a managed platform. Which one fits best depends on how much scripting you want, whether you need team workflows, and how you prefer to run tests. If you are evaluating options, the broader best load testing tools guide can help you map the landscape.

The important point for this article is simpler: if your goal is real performance validation, skip the temptation to force Postman into the role. Use the right category of tool.

Common mistakes teams make with Postman and performance testing

The first mistake is treating repeated requests as meaningful load. Sending a request in a loop does not automatically simulate user concurrency. The second mistake is measuring only elapsed time for a small run and treating that as a capacity signal. That kind of measurement can be wildly misleading.
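The difference between a loop and concurrency is easy to demonstrate. The Python sketch below replaces real HTTP calls with `fake_request`, a hypothetical stand-in that only sleeps, and records how many requests are in flight at once. A sequential loop, which is roughly how a simple collection run proceeds, never has more than one request outstanding. Launching the same requests as concurrent tasks does.

```python
import asyncio


class InFlightTracker:
    """Counts requests that are currently in flight and remembers the peak."""

    def __init__(self) -> None:
        self.current = 0
        self.peak = 0

    async def fake_request(self, latency_s: float = 0.01) -> None:
        # Stand-in for an HTTP call: mark it in flight, wait, then complete.
        self.current += 1
        self.peak = max(self.peak, self.current)
        await asyncio.sleep(latency_s)
        self.current -= 1


async def sequential_loop(n: int) -> int:
    tracker = InFlightTracker()
    for _ in range(n):
        await tracker.fake_request()  # each request waits for the previous one to finish
    return tracker.peak


async def concurrent_users(n: int) -> int:
    tracker = InFlightTracker()
    # Start all requests at once, like n virtual users firing together.
    await asyncio.gather(*(tracker.fake_request() for _ in range(n)))
    return tracker.peak


if __name__ == "__main__":
    print("sequential loop peak in-flight:", asyncio.run(sequential_loop(50)))
    print("concurrent users peak in-flight:", asyncio.run(concurrent_users(50)))
```

The sequential loop reports a peak of 1, and the concurrent version a peak of 50. Only the second puts the server under simultaneous pressure. Real load tools go further by spreading that concurrency across processes or machines.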

The third mistake is assuming a passing collection proves the API is production-ready. Functional correctness is necessary, but it is not a proxy for resilience. The fourth mistake is ignoring the rest of the system. Real load testing often exposes issues in databases, caches, message brokers, or third-party services that Postman will never reveal in normal collection runs.

The fifth mistake is building a fragile custom workaround instead of moving to a real tool. If your team is spending time hacking together pseudo-load behavior in Postman, that effort is usually a sign that you have already outgrown the approach.

Should Postman have a place in CI/CD?

Yes, but mainly as part of functional validation. Postman collections and Newman runs can be very helpful in CI/CD for smoke checks, schema expectations, and contract-style validation. That is a strong use case. It is just different from performance testing.

For load testing in CI/CD, the pattern is different. Run light functional checks with Postman or Newman, then run lightweight performance gates or scheduled load tests with a tool built for that job. The load testing in CI/CD and continuous load testing guides explain why this layered approach works well in practice.
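As one illustration of a lightweight performance gate, the Python sketch below checks a run's summary against thresholds and returns a nonzero exit code when any threshold fails. Most CI systems treat a nonzero exit code as a failed step. The metric names, the limits, and the idea of reading them from a summary dictionary are assumptions. Dedicated load testing tools usually provide their own threshold mechanism.

```python
# Hypothetical release thresholds: metric name -> maximum allowed value.
THRESHOLDS = {
    "p95_ms": 500.0,
    "error_rate": 0.01,
}


def check_thresholds(summary: dict[str, float], thresholds: dict[str, float]) -> list[str]:
    """Return a human-readable message for every threshold that is breached or missing."""
    failures = []
    for metric, limit in thresholds.items():
        value = summary.get(metric)
        if value is None:
            failures.append(f"{metric}: missing from results")
        elif value > limit:
            failures.append(f"{metric}: {value} exceeds limit {limit}")
    return failures


def gate(summary: dict[str, float]) -> int:
    """Print any failures and return a process exit code (0 = pass, 1 = fail)."""
    failures = check_thresholds(summary, THRESHOLDS)
    for message in failures:
        print("THRESHOLD FAILED:", message)
    return 1 if failures else 0


if __name__ == "__main__":
    # In a real pipeline this summary would come from the load test's output.
    run_summary = {"p95_ms": 620.0, "error_rate": 0.004}
    # A CI wrapper would pass this value to sys.exit() to fail the job.
    print("exit code:", gate(run_summary))
```

With the sample summary, a p95 of 620 ms breaches the 500 ms limit, so the gate returns 1. Treating a missing metric as a failure keeps a broken test run from passing silently.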

Using both categories together is often the most mature setup. Postman validates correctness. Load testing validates operational behavior. One complements the other; neither fully replaces the other.

Final verdict: can Postman handle load testing?

Postman is a strong API development tool, and it absolutely deserves a place in many engineering workflows. But if the question is whether it can serve as a serious load testing platform, the answer is no for most real-world needs.

Use Postman to build, inspect, document, and validate requests. Use it to remove functional uncertainty before performance work begins. Then switch to a true load testing approach when you need to understand concurrency, throughput, latency under load, and system behavior at scale.

That is the honest and practical answer. Postman is good at what it was built for. Load testing just is not the center of that design.

How to explain the difference to your team

If your team keeps blurring the line between Postman and load testing, the simplest explanation is this: Postman helps you prove that an API request is correct, while load testing helps you prove that the API remains healthy when many requests arrive under real operating conditions. One focuses on correctness first. The other focuses on behavior under stress and concurrency.

This distinction is useful in planning conversations because it turns a vague debate into a workflow decision. If the release risk is primarily “did we break the endpoint?”, use Postman and Newman checks. If the release risk is “will the API stay fast and stable under real traffic?”, run a proper load test. Once teams see those as different questions, tool choice becomes much less emotional and much more practical.

I have found that this framing also improves stakeholder communication. Product or leadership teams do not usually care whether you used Postman or another tool. They care whether the result is trustworthy. Explaining that Postman verifies API correctness while a real load testing workflow verifies production readiness makes the boundary easy to understand.

Postman-to-load-testing migration checklist

Use this checklist when a request or collection is ready in Postman and you want to turn it into a real performance scenario.

| Step | What to check | Why it matters |
|---|---|---|
| 1. Confirm the request | Method, URL, headers, body, and auth work in Postman | A load test should not fail because of a broken request |
| 2. Identify dynamic data | Tokens, IDs, timestamps, user-specific payloads | Static data can create unrealistic cache behavior or duplicate errors |
| 3. Define user behavior | Which requests run, in what order, and how often | Real load testing models workflows, not just endpoints |
| 4. Pick load shape | Ramp-up, steady duration, peak, and cool-down | Concurrency over time reveals bottlenecks better than a loop |
| 5. Set thresholds | p95 latency, error rate, throughput, and availability | Thresholds turn a test into a decision |
| 6. Compare runs | Baseline vs new release or infrastructure change | Regression detection needs repeatable evidence |
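Step 2 is often where a Postman request needs the most rework. The Python sketch below gives each virtual user its own test account and a unique request ID instead of replaying one static body. This helps avoid artificially warm caches and duplicate-key errors. The payload field names and the `test_users` list are hypothetical. In practice, this data usually comes from a seeded test dataset.

```python
import itertools
import uuid


def payloads_for_vus(test_users: list[str], n_vus: int) -> list[dict]:
    """Build one request payload per virtual user with non-repeating request IDs."""
    if not test_users:
        raise ValueError("need at least one test user")
    # Reuse accounts round-robin when there are more virtual users than test accounts.
    accounts = itertools.cycle(test_users)
    return [
        {
            "vu": vu_id,
            "username": next(accounts),
            "request_id": str(uuid.uuid4()),  # unique per payload, avoids duplicate-key errors
        }
        for vu_id in range(n_vus)
    ]


if __name__ == "__main__":
    users = ["qa-user-1", "qa-user-2", "qa-user-3"]
    for payload in payloads_for_vus(users, n_vus=5):
        print(payload)
```

Cycling through a small account pool is a compromise. If the API behaves differently when one account has many concurrent sessions, seed enough accounts to give each virtual user its own.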

Newman example, and why it is not enough

A typical Newman command is useful for CI automation:

```shell
newman run postman_collection.json \
  --environment staging.postman_environment.json \
  --reporters cli,junit
```

This can answer: did the collection run, did the assertions pass, and did the API behave correctly for the scripted flow? That is valuable, especially before a release.

But a real load test asks different questions:

- What happens when 200, 1,000, or 5,000 users run similar flows at the same time?

- Does p95 latency stay inside the release threshold?

- Which endpoint fails first as concurrency rises?

- Does the database, cache, queue, or third-party dependency saturate before the API server?

- Can the same scenario be rerun next week and compared against the previous result?

That is the gap. Newman is a strong automation runner for Postman collections, but it is not a complete performance testing system.

Decision table: use Postman or load testing?

| Question | Use Postman? | Use load testing? |
|---|---|---|
| Does the endpoint return the correct response? | Yes | Optional |
| Did authentication or headers break? | Yes | No |
| Can we run API smoke checks in CI? | Yes, often with Newman | Optional |
| Can the API handle peak traffic? | No | Yes |
| What is p95 latency under concurrency? | No | Yes |
| Did this release introduce a performance regression? | Not reliably | Yes |

Frequently asked questions

Can Postman be used for load testing?

Only in a very limited sense. Postman can repeat requests and automate collections, but it is not designed for serious concurrency simulation, distributed load generation, or rich performance analysis. For most real load testing needs, use a dedicated load testing tool.

Is Newman a load testing tool?

No. Newman is a command-line runner for Postman collections. It is useful for automation and CI checks, but it is not a purpose-built load testing platform.

What should I use instead of Postman for load testing?

Use a tool or platform designed for load testing. The right choice depends on your team and workflow, but the important part is support for concurrency, realistic scenarios, strong metrics, and repeatable execution.

Should I still keep Postman in my workflow?

Yes. Postman is excellent for API development and functional validation. It works well as the step before load testing, just not as the final answer when performance is the real question.
